Microsoft • 2017 • Design and prototyping

Voice and UI design for Microsoft’s AI assistant

As a user experience designer for Cortana, Microsoft’s AI assistant, I worked on natural language interfaces across text and voice for home and family scenarios. I primarily designed scenarios around family time management, sharing digital artifacts with the family, and finding lost mobile devices through speech.

Here’s an example of a voice-only interaction:

Interacting with the assistant through natural language

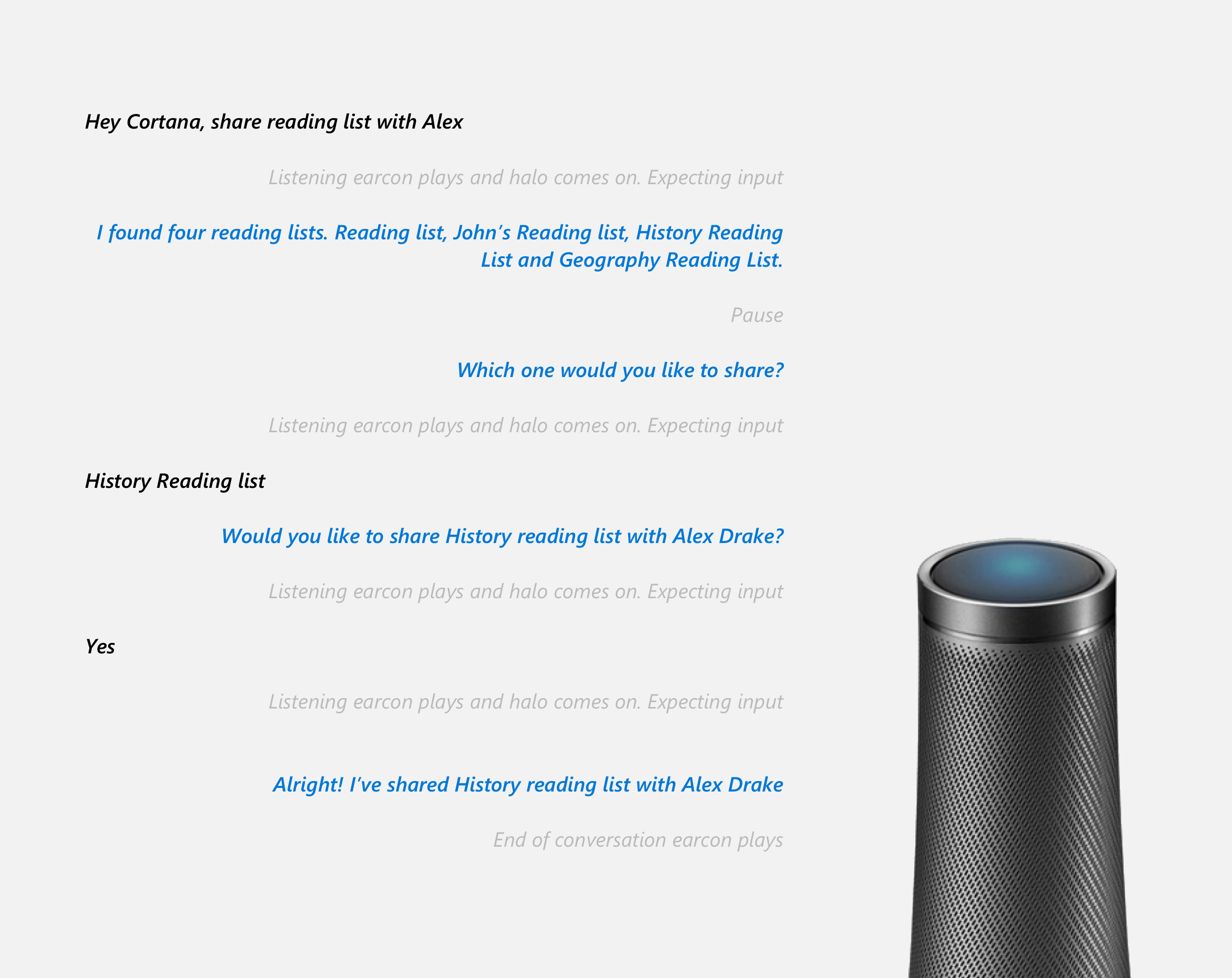

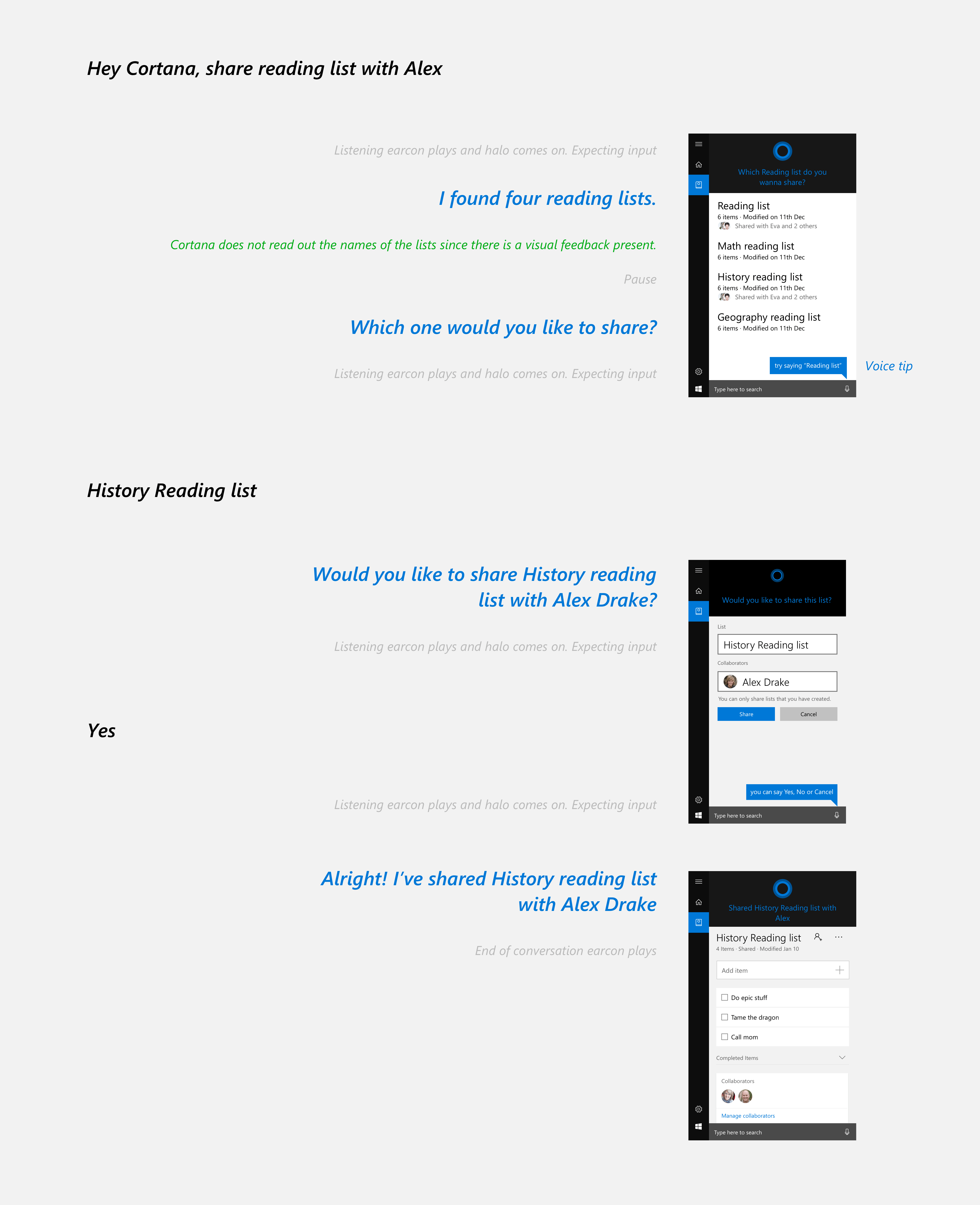

The scenarios were designed to be voice-first, and I worked on the dialogs and the dialog/voice flows.

Designing dialogs is similar to writing a script between a user and the assistant. I iterated on the dialog design many times, both by writing dialogs and by prototyping conversations, so that the assistant sounded neither too human nor too much like a bot. Dialogs also had to be designed with the modality of the device in mind.

For example, here’s a dialog for a voice-only interaction:

Here’s a dialog when the user has visuals along with voice:

Dialog flows

A dialog flow is a schematic representation of every type of dialog the assistant has to handle. Like the dialogs themselves, these flows depend on the modality of the device: voice, text, visual, and so on.
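To make the idea concrete, a dialog flow can be sketched as a small state machine in which each step carries a prompt per modality and a set of transitions keyed by what the user says. This is only an illustration: the step names, intents, prompts, and the `FLOW`, `prompt_for`, and `next_step` helpers are all hypothetical and do not reflect how Cortana was built.

```python
from dataclasses import dataclass, field
from enum import Enum


class Modality(Enum):
    VOICE = "voice"
    VOICE_VISUAL = "voice+visual"
    TEXT = "text"


@dataclass
class Step:
    """One node in a dialog flow."""
    prompts: dict                                    # Modality -> assistant prompt
    transitions: dict = field(default_factory=dict)  # user intent -> next step name


# Hypothetical flow for sharing a list with a family member.
FLOW = {
    "start": Step(
        prompts={
            Modality.VOICE: "Which list would you like to share?",
            Modality.VOICE_VISUAL: "Which list? Your lists are on screen.",
            Modality.TEXT: "Type the name of the list to share.",
        },
        transitions={"name_list": "confirm", "cancel": "end"},
    ),
    "confirm": Step(
        prompts={
            Modality.VOICE: "Share it with your family?",
            Modality.VOICE_VISUAL: "Share this list with your family?",
            Modality.TEXT: "Share with family? (yes/no)",
        },
        transitions={"yes": "end", "no": "start"},
    ),
    "end": Step(prompts={m: "Okay." for m in Modality}),
}


def prompt_for(step_name, modality):
    """Return the prompt that fits the device's modality at this step."""
    return FLOW[step_name].prompts[modality]


def next_step(step_name, intent):
    """Follow a transition; unrecognized intents stay on the same step (a reprompt)."""
    return FLOW[step_name].transitions.get(intent, step_name)
```

Keeping the prompts separate from the transitions means the same flow can be reused across devices, with only the wording changing per modality.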

Dialog flows (Voice)

Voice-only dialog flow

Voice + visual dialog flow

Dialog flows (Text)

For situations where a user cannot speak to the assistant (conference rooms, lifts), text flows were designed as an alternative for devices with a visual modality. In this type of flow, the user types the command instead of speaking it.

Text-only dialog flow

GUI flows

Alongside the natural language interactions described above, traditional graphical interfaces remain better suited to complex actions such as adding members to a family via email or managing settings, preferences, and permissions.

Adding family members to an account

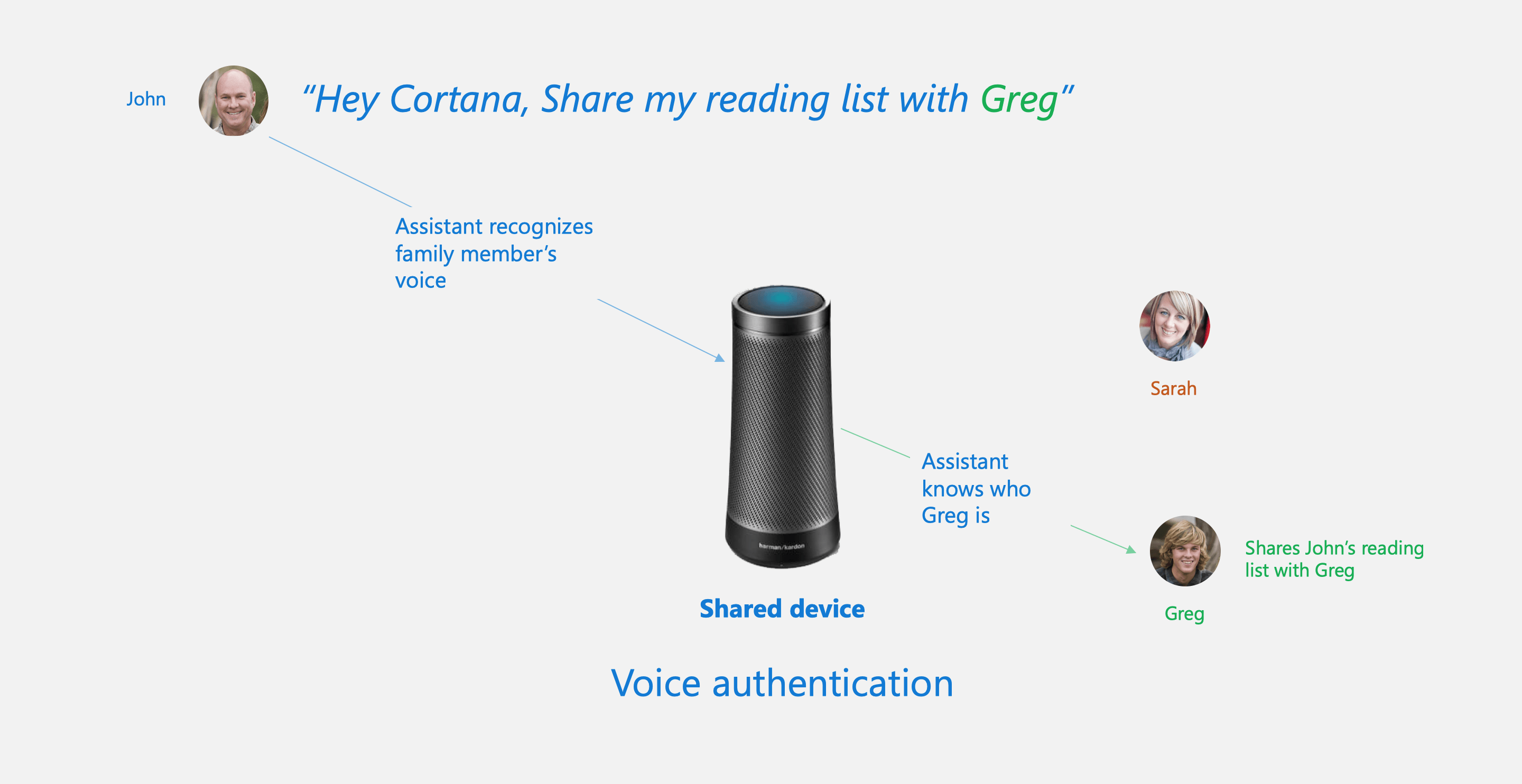

Voice authentication

A family at its core is a group of people who trust each other. For a shared family device, the assistant needs to understand the context of the conversation (where it is, what is being asked) as well as who is speaking to it. The team worked on enabling voice authentication for different scenarios. For example, when a user says ‘find my phone’ on a shared device like a smart speaker, the assistant recognizes the user and rings their phone accordingly. Voice authentication is especially important for scenarios that act on one person’s devices or data, such as finding a phone or sharing a list.
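The ‘find my phone’ example can be sketched roughly as follows. This is a minimal illustration under stated assumptions: it presumes some speaker-recognition step has already produced a speaker ID and a confidence score, and the household registry, the threshold value, the response wording, and the `ring` stub are all hypothetical rather than the actual product design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FamilyMember:
    name: str
    phone_id: str


# Hypothetical registry mapping recognized speaker IDs to family members.
HOUSEHOLD = {
    "spk-1": FamilyMember("Asha", "phone-asha"),
    "spk-2": FamilyMember("Ben", "phone-ben"),
}

CONFIDENCE_THRESHOLD = 0.8  # assumed cut-off for trusting the recognition result
RUNG = []                   # records which phones were rung, for illustration


def ring(phone_id: str) -> None:
    """Stand-in for whatever service would actually ring the device."""
    RUNG.append(phone_id)


def handle_find_my_phone(speaker_id: Optional[str], confidence: float) -> str:
    """Ring the speaker's own phone, or ask for clarification if unsure who spoke."""
    member = HOUSEHOLD.get(speaker_id) if speaker_id else None
    if member is None or confidence < CONFIDENCE_THRESHOLD:
        return "Sorry, I couldn't tell who's speaking. Whose phone should I ring?"
    ring(member.phone_id)
    return f"Okay {member.name}, ringing your phone now."
```

The fallback branch matters on a shared device: when the speaker is not recognized with enough confidence, the assistant asks instead of ringing the wrong person’s phone.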

Find my phone

Share a list with a family member with voice

Due to the confidential nature of unreleased projects and products, much has been left out.